Predicting a sound system’s performance is a complex science involving many variables, some of which can be measured more accurately than others. Maximum loudspeaker output is typically measured using pink noise, which contains equal energy in every octave. But while pink noise replicates music’s spectral breadth, it falls short of modeling music’s dynamic nuance and unpredictability.
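The "equal energy in every octave" property follows from pink noise's power spectral density falling in proportion to 1/f. Taking the density as S(f) = k/f for some constant k (an idealization; real pink-noise generators only approximate it over the audio band), the power in an octave running from f₀ to 2f₀ is:

$$
P_{\text{oct}} = \int_{f_0}^{2f_0} \frac{k}{f}\,df = k \ln\frac{2f_0}{f_0} = k \ln 2
$$

The result does not depend on f₀, so every octave carries the same power, even though the power per hertz drops by 10·log₁₀(2) ≈ 3 dB each time the frequency doubles.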

Meyer Sound hopes to close this gap with the introduction of M-Noise, a mathematically derived test signal engineered to better emulate the complexity of music. The company has made the signal available as a free download, and outlines best practices for measurement. I sat down with Pablo Espinosa, Meyer's Vice President and Chief Loudspeaker Designer, to find out how promoting an accurate, verifiable reference standard can lead to greater confidence in system design, measurement, and performance.

Sarah Jones: What led Meyer to develop M‑Noise?

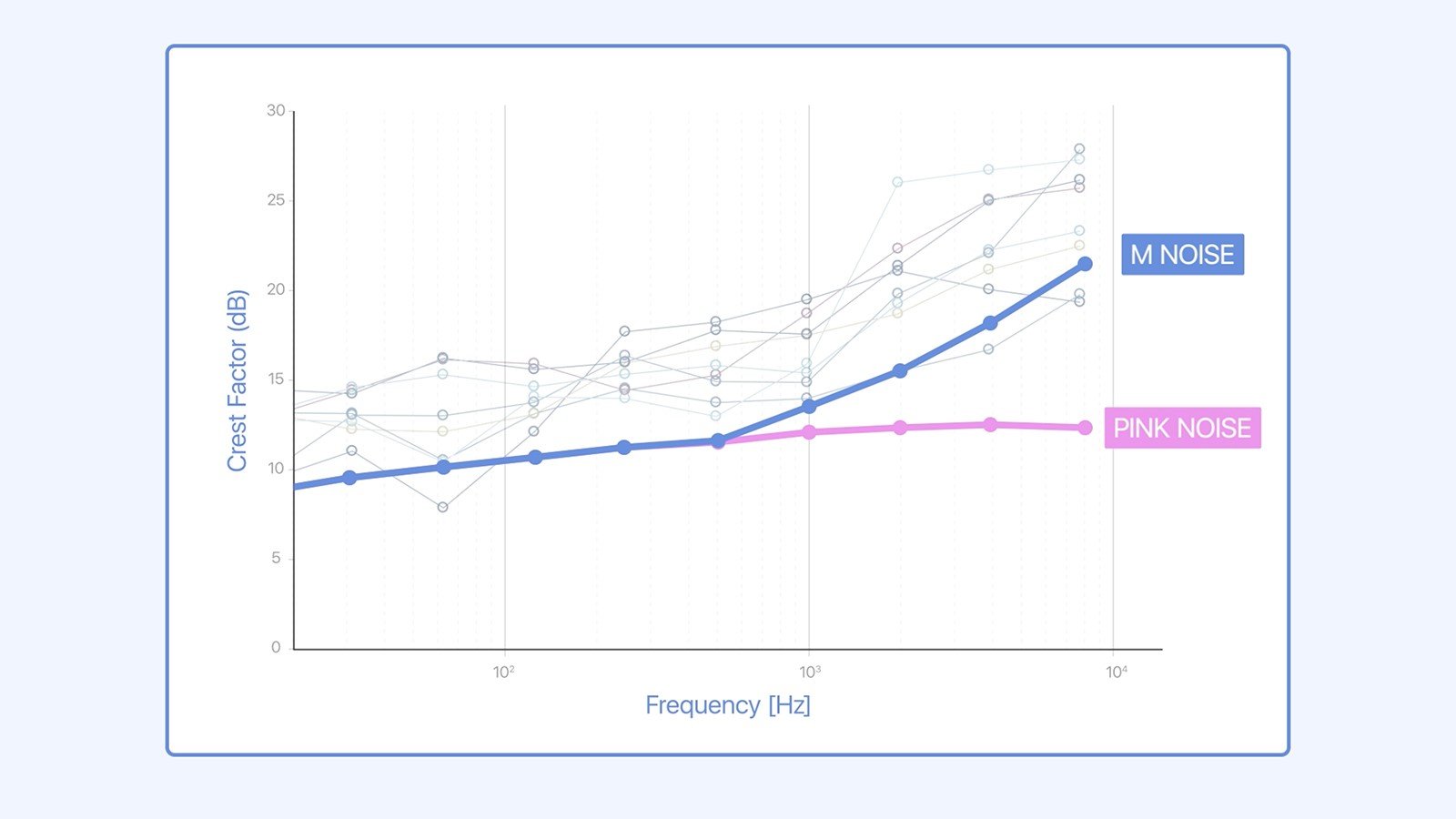

Pablo Espinosa: Several incidents in a short span of time drew John Meyer's attention to this problem. People would be in an arena before a show, trying to measure the maximum peak SPL of a system with pink noise, and they wouldn't get the results they expected. Pink noise doesn't behave like music because its crest factor, the difference between peak and average levels, is nearly the same at all frequencies.

That was creating some stress for the engineer who just wanted to do a quick check before the concert: he didn't get the numbers he wanted to see, but once the band started playing, he got a few dB more. A similar situation has happened with cinema specs, where the crest factor of pink noise is not high enough to verify the crest factor the specs require.

M-Noise exhibits a crest factor that rises with frequency, whereas pink noise's crest factor stays nearly constant from octave to octave.

The other thing that got us thinking is that, as an industry, we really don't have a standard. It’s the obligation of the manufacturer to provide accurate data. We cannot be inaccurate about, for example, the weight of the speaker, because that can create safety problems. We also observed that published numbers are all over the place, and that can create problems for users and consultants when a speaker doesn't actually perform the way its data sheet says it will.

At the end of the day, most live sound applications are reproducing music, and we want to provide an accurate statement about how this speaker is going to linearly reproduce peaks in music. But obviously, we're never going to agree on which music to use as a reference, so we started looking at the difference between music and pink noise.

SJ: When you looked at the signals, was crest factor the point of departure?

PE: Yes. We were specifically looking at crest factor because whenever we discussed why we could get higher peaks with music, the answer came down to crest factor.

From the start, we knew that pink noise is random. Music doesn't behave like that, but at the end of the day, pink noise does contain all the frequencies that music does. Even the 18 dB broadband crest factor that we picked for M‑Noise, compared with the 12 dB broadband crest factor of pink noise, is still a fairly conservative number, but we thought that going 6 dB beyond pink noise in crest factor would be enough to create a signal that is more useful than pink noise.
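Crest factor is the ratio of a signal's peak level to its RMS level, usually expressed in dB. The short Python sketch below applies that standard definition to two simple waveforms and converts the 12 dB and 18 dB broadband figures into linear peak-to-RMS ratios. It is only an illustration: it does not generate pink noise or M‑Noise, and the helper names `crest_factor_db` and `db_to_ratio` are our own.

```python
import math


def crest_factor_db(samples):
    """Return the crest factor (peak / RMS) of a sequence of samples, in dB."""
    peak = max(abs(x) for x in samples)
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return 20.0 * math.log10(peak / rms)


def db_to_ratio(db):
    """Convert a level difference in dB to a linear amplitude ratio."""
    return 10.0 ** (db / 20.0)


fs = 48_000  # sample rate in Hz
# One second of a 1 kHz sine: a whole number of cycles, peak of 1.0.
sine = [math.sin(2 * math.pi * 1000 * n / fs) for n in range(fs)]
# A square wave, whose peak level equals its RMS level.
square = [1.0 if (n // 24) % 2 == 0 else -1.0 for n in range(fs)]

print(f"sine:   {crest_factor_db(sine):.2f} dB")    # about 3.01 dB
print(f"square: {crest_factor_db(square):.2f} dB")  # 0.00 dB
print(f"12 dB -> peak/RMS {db_to_ratio(12):.2f}")   # 3.98
print(f"18 dB -> peak/RMS {db_to_ratio(18):.2f}")   # 7.94
```

Put another way, 6 dB of extra crest factor means the peaks rise roughly twice as far above the average level (7.94 / 3.98 ≈ 2), since 20·log₁₀(2) ≈ 6 dB.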

SJ: Is there a way to quantify the difference in accuracy between pink noise and M‑Noise?

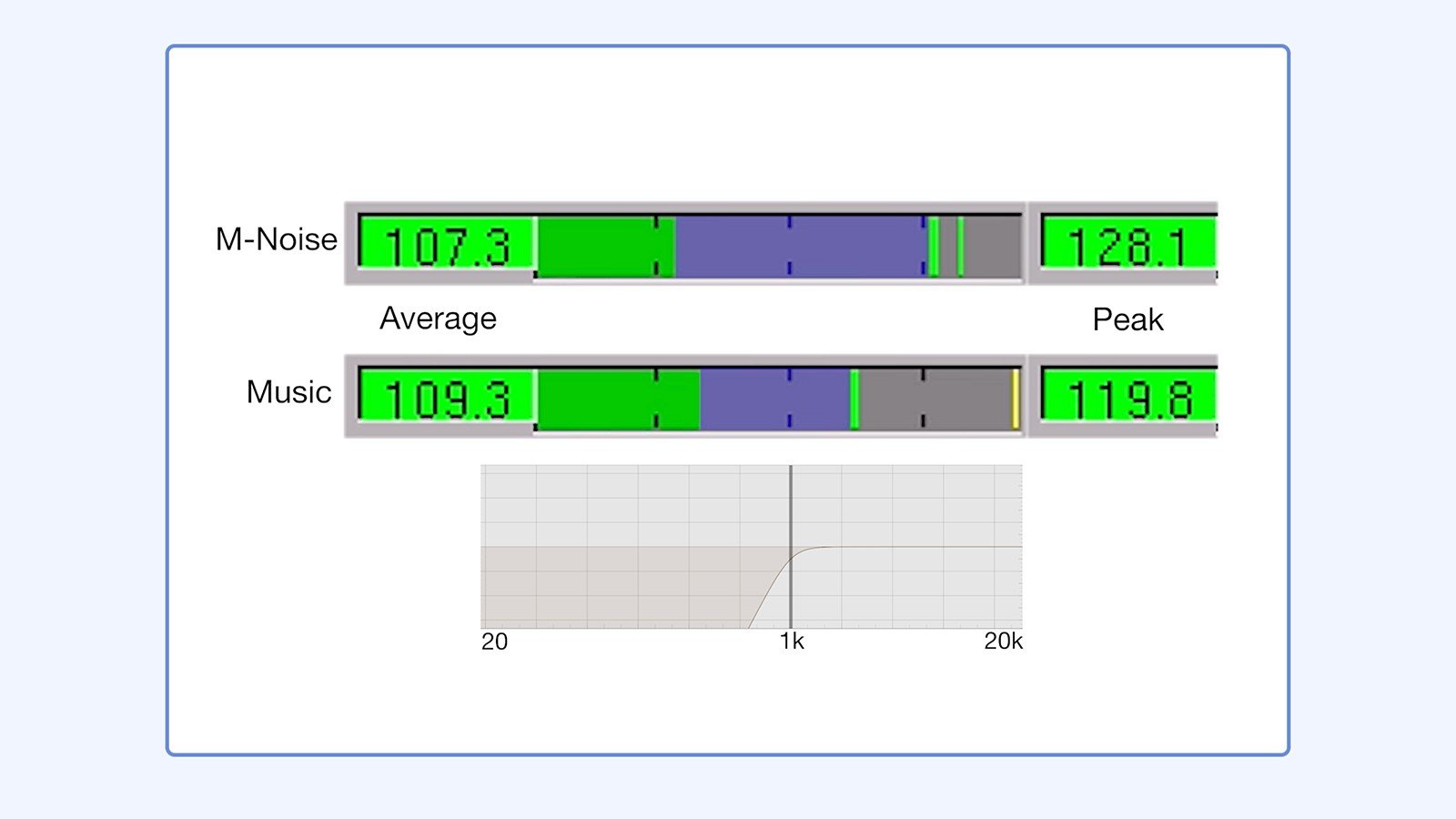

PE: Yes. We decided that a single number can’t tell the whole story. So in our data sheets, we're publishing the linear peak number, measured when the speaker is not under stress. It's not the level at which the speaker is about to blow; it’s how the speaker should actually be used.

So we decided to use three signals in our data sheets. We continue using pink noise and B‑Noise, which is pink noise filtered with the “B” weighting curve to emulate band-limited signals like speech, and we have added M‑Noise to give a fuller picture and capture the real extremes of operation. We publish all of those numbers using the same procedure, one that preserves linearity. The other important element is the coherence trace of a dual‑channel FFT analyzer, which indicates the similarity between the signal we measure and the reference. If there’s contamination like distortion or noise, it will show up in the coherence trace.

You need to be mindful of two different criteria: one is linearity, the other is coherence. So it’s not just a test signal we’re proposing; it’s an entire method that shows the loudspeakers are still behaving linearly, distortion is acceptable, and the frequency response is still acceptable, without excessive compression or limiting.
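A coherence trace shows, frequency by frequency, how much of the measured signal is linearly explained by the reference. A common estimate is magnitude-squared coherence, |Sxy|² / (Sxx·Syy), with the auto- and cross-spectra averaged over many windowed segments (Welch's method). The standard-library Python sketch below is a minimal illustration under our own assumptions (64-sample non-overlapping Hann-windowed segments, a naive DFT, synthetic white noise standing in for the reference); it is not the algorithm of Meyer's tools or any particular analyzer, and all names in it are hypothetical.

```python
import cmath
import math
import random


def dft(x):
    """Naive discrete Fourier transform (O(n^2)); adequate for short segments."""
    n = len(x)
    return [
        sum(x[k] * cmath.exp(-2j * math.pi * f * k / n) for k in range(n))
        for f in range(n)
    ]


def coherence(ref, meas, seg_len=64):
    """Magnitude-squared coherence per frequency bin, averaged over
    non-overlapping Hann-windowed segments (assumed segmentation)."""
    window = [0.5 - 0.5 * math.cos(2 * math.pi * k / seg_len) for k in range(seg_len)]
    n_bins = seg_len // 2 + 1
    sxx = [0.0] * n_bins  # auto-spectrum of the reference
    syy = [0.0] * n_bins  # auto-spectrum of the measurement
    sxy = [0j] * n_bins   # cross-spectrum between the two
    n_segs = min(len(ref), len(meas)) // seg_len
    for s in range(n_segs):
        start = s * seg_len
        x = dft([w * v for w, v in zip(window, ref[start:start + seg_len])])
        y = dft([w * v for w, v in zip(window, meas[start:start + seg_len])])
        for f in range(n_bins):
            sxx[f] += abs(x[f]) ** 2
            syy[f] += abs(y[f]) ** 2
            sxy[f] += x[f].conjugate() * y[f]
    return [
        abs(sxy[f]) ** 2 / (sxx[f] * syy[f]) if sxx[f] * syy[f] > 0 else 0.0
        for f in range(n_bins)
    ]


def mean(values):
    return sum(values) / len(values)


random.seed(1)
n = 64 * 32  # 32 segments of 64 samples
ref = [random.gauss(0.0, 1.0) for _ in range(n)]

clean = [0.5 * v for v in ref]                     # linear gain only
noisy = [v + random.gauss(0.0, 1.0) for v in ref]  # equal-power added noise
clipped = [max(-0.5, min(0.5, v)) for v in ref]    # hard clipping (distortion)

print(f"clean:   {mean(coherence(ref, clean)):.3f}")  # 1.000
print(f"noisy:   {mean(coherence(ref, noisy)):.3f}")
print(f"clipped: {mean(coherence(ref, clipped)):.3f}")
```

A pure gain change leaves coherence at 1 in every bin, while added noise or clipping pulls it below 1. Averaging matters: with a single segment, this estimate equals 1 for any pair of signals, which is why analyzers average over many frames.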

Crest factors of M-Noise and music, compared.

SJ: This is all pretty accessible technology. Why do you think we've settled for a pink noise standard for so long?

PE: We all continue to learn things. I don't think we ever really thought about the difference between pink noise and music; we had just settled on pink noise as a good test-measurement signal because of its full‑range frequency content. But then we started measuring SPL and seeing big differences between pink noise and music, which was part of what prompted this work.

The other part of the question is, what is the methodology and the process to measure, and when do you stop? Because real‑time analyzers and SPL meters don't care about distortion. You can drive a speaker as hard as you can, it can be completely distorted, and the SPL meter is still going to give you a nice, big number. You’d never use it like that in a concert, right?

SJ: Not a concert I want to go to.

PE: Exactly. So that's when we started asking, "Where do you stop in order to be realistic?" This number has to be realistic in order to be useful. Otherwise it's like asking, let's say, how fast can my car go? Well, it can go 500 kilometers per hour if I drop it from a plane. Somebody could stand on the ground with a radar speed gun and say, "Hey, I measured it." He's telling the truth, but the number just isn't useful. It's not how the car is going to be used.

We're very conscious about the responsibility and obligation we have as a manufacturer, and we're trying to improve the data we provide to our users. They're the ones out there in the trenches, and we don't want them to fail because we didn't give them accurate data.

When they plug their designs into our prediction program, the prediction is going to be accurate, and they can measure and verify it. That is part of what Meyer has always wanted to do: our MAPP XT System Design Tool is very accurate, and the speakers we ship are very consistent; now if you want to verify those measurements, you can actually do it, and the results are going to match.

SJ: Is your hope that this becomes an industrywide standard, that published specs ultimately include M‑Noise?

PE: That would be the hope. I think as an industry we've been getting kind of nonsense ratings, with manufacturers publishing the biggest numbers possible. It’s not the customer’s job to police the manufacturers. So we are proposing at least one method that lets people reproduce what the data sheet says, because in the past a lot of that has been very obscure. Hopefully other loudspeaker manufacturers will start looking into this and say, "Hey, yeah, that kind of makes sense."

SJ: It seems like it would only elevate the industry to rely on the most objective specs.

PE: I have a lot of respect for John Meyer; he’s always pointing out that we need to come together as an industry, because every time someone fails in the industry, the whole industry fails. When somebody does a bad job in a performance venue, we all look bad. It’s not just that brand or that person or that consultant.

It will be interesting to see how it develops. The method will be refined, the tools will become more available and better, and people will start understanding things they haven’t been paying attention to, like coherence in a dual‑channel FFT analyzer.

What I love about this industry is that the learning never ends. There are always new things to learn, ways to be more precise and more accurate, and better tools to develop.

Sarah Jones is a writer, editor, and content producer with more than 20 years' experience in pro audio, including as editor-in-chief of three leading audio magazines: Mix, EQ, and Electronic Musician. She is a lifelong musician and committed to arts advocacy and learning, including acting as education chair of the San Francisco chapter of the Recording Academy, where she helps develop event programming that cultivates the careers of Bay Area music makers.